A leak of Claude Code’s internal source code has made it possible to observe how one of Anthropic’s most advanced AI systems is structured. Beyond the incident itself, what matters is what it reveals about the real architecture behind modern AI tools.

The code shows that LLMs alone are not enough to reliably solve complex tasks. Performance depends on an orchestration layer that wraps the model and coordinates how it is executed, turning it into a system that can run continuously and in a controlled way.

Claude Code does not rely on a simple question-and-answer loop. Instead, it is built as a continuous state machine. This design allows the system to maintain context across actions, chain multiple steps of work, and handle errors without interrupting the workflow. When context limits are reached or failures occur, the system can recover its previous state and continue execution without restarting.
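To make the state-machine idea concrete, here is a minimal sketch in Python. It is not taken from the leaked code: the phase names, the `AgentState` fields, and the retry policy are all assumptions chosen for illustration. What it shows is the general pattern described above: state lives outside any single model call, so a failed step moves the loop into a recovery phase instead of ending the run.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Phase(Enum):
    # Hypothetical phases; the real system's states are not known here.
    PLAN = auto()
    ACT = auto()
    RECOVER = auto()
    DONE = auto()


@dataclass
class AgentState:
    phase: Phase = Phase.PLAN
    pending: list = field(default_factory=list)   # steps still to run
    history: list = field(default_factory=list)   # log of what happened
    retries: int = 0                              # retries for the current step


def run(steps, execute, max_retries=2):
    """Drive the loop until no work is left, surviving step failures."""
    state = AgentState(pending=list(steps))
    while state.phase is not Phase.DONE:
        if state.phase is Phase.PLAN:
            state.phase = Phase.ACT if state.pending else Phase.DONE
        elif state.phase is Phase.ACT:
            step = state.pending[0]
            try:
                result = execute(step)
            except Exception as exc:
                # Record the failure and switch phase; the state is preserved.
                state.history.append(f"error:{step}:{exc}")
                state.phase = Phase.RECOVER
            else:
                state.pending.pop(0)
                state.history.append(f"ok:{step}:{result}")
                state.retries = 0
                state.phase = Phase.PLAN
        elif state.phase is Phase.RECOVER:
            state.retries += 1
            if state.retries > max_retries:
                # Give up on this step only, then carry on with the rest.
                skipped = state.pending.pop(0)
                state.history.append(f"skipped:{skipped}")
                state.retries = 0
            state.phase = Phase.PLAN
    return state
```

With an `execute` function that fails once on a given step, the loop logs the error, retries, and still finishes the remaining steps. In a real agent, `execute` would wrap a model or tool call and the state would likely be persisted to disk so that it survives a process restart.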

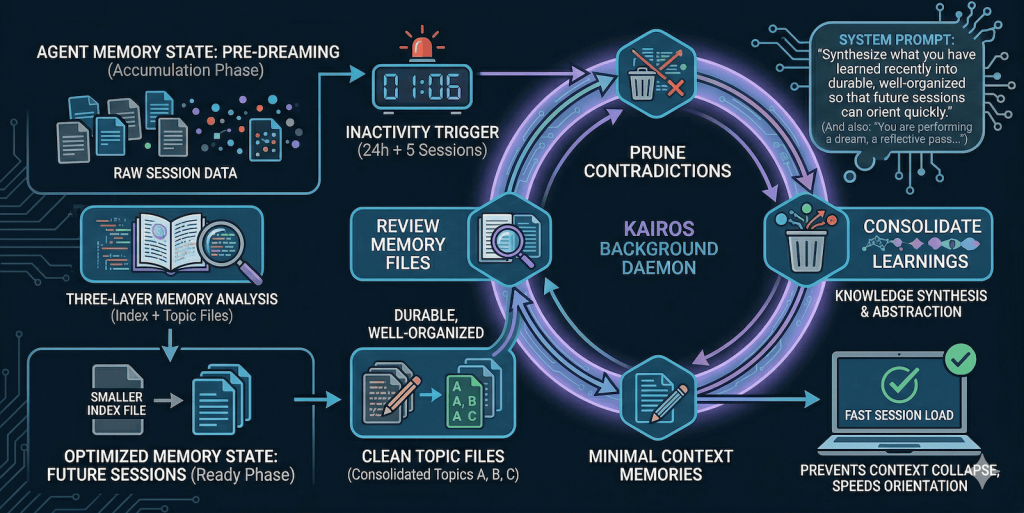

Memory management is one of the key parts of the system. It uses compression mechanisms that remove redundant information and keep a more efficient working history. On top of this layer sits KAIROS, a background process that summarizes and reorganizes the interaction history. Its purpose is to prevent the context window from filling up and to maintain consistency in long-running sessions.

Without these mechanisms, model quality tends to degrade as context grows, leading to loss of coherence and broken task continuity. In practice, much of the useful behavior of the system depends on how information is prepared and filtered before it reaches the model.
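The compaction idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the mechanism used by Claude Code or KAIROS: the word-count token estimate, the `budget` and `keep_recent` parameters, and the placeholder `summarize` function are all hypothetical. In a real system the summary would typically come from a model call.

```python
def estimate_tokens(messages):
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    return sum(len(m.split()) for m in messages)


def summarize(messages):
    # Placeholder summarizer (assumption); a real one would call a model.
    return f"[summary of {len(messages)} earlier messages]"


def compact(history, budget, keep_recent=2):
    """Replace older messages with one summary when over the token budget."""
    if estimate_tokens(history) <= budget or len(history) <= keep_recent:
        return list(history)
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    # The most recent messages stay verbatim; everything older is condensed.
    return [summarize(older)] + recent
```

Keeping the latest messages verbatim while condensing older ones is one common trade-off: the model keeps exact detail for the work in progress and only a compressed view of what came before.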

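Optimization also applies at the inference level. The system puts the available tools in a consistent order before each API call, a design choice that helps stabilize the KV cache: when the beginning of the prompt is identical from one call to the next, the attention keys and values already computed for that prefix can be reused instead of recomputed. This reduces the computational cost of processing the prompt, with a direct impact on response latency.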
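A short Python sketch shows why ordering matters. The request layout, the sort-by-name rule, and the function names are assumptions made for illustration; the source only says that tools are ordered before each call. The hash stands in for a prefix-cache lookup key: identical prefixes give identical keys, and any reordering gives a different one.

```python
import hashlib
import json


def build_prefix(system_prompt, tools):
    # Sort tools by name (assumed rule) so the serialized prefix does not
    # depend on the order in which tools happened to be registered.
    ordered = sorted(tools, key=lambda tool: tool["name"])
    return json.dumps({"system": system_prompt, "tools": ordered}, sort_keys=True)


def prefix_key(prefix):
    # Stand-in for a cache key: the same prefix text always hashes the same.
    return hashlib.sha256(prefix.encode("utf-8")).hexdigest()


tools_a = [
    {"name": "read_file", "description": "Read a file"},
    {"name": "bash", "description": "Run a shell command"},
]
tools_b = list(reversed(tools_a))  # same tools, different registration order

key_a = prefix_key(build_prefix("You are a coding agent.", tools_a))
key_b = prefix_key(build_prefix("You are a coding agent.", tools_b))
```

Here `key_a` and `key_b` are equal even though the tool lists arrived in different orders, which is the property a prefix cache needs in order to get a hit.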

Overall, the architecture shows that the value of these systems is not only in the model itself, but in the engineering around it. The combination of orchestration, state management, error handling, and inference optimization is what allows AI systems to operate reliably in real production environments.

Technical References

- Anthropic error reveals unseen features of Claude Code, its programming assistant – Wired

- Official Claude Code documentation – Claude Code

- KV cache optimization for inference – NVIDIA Technical Blog LLM Inference

- Design patterns in AI agents – DeepLearning AI Agentic Design Patterns

- Attention Is All You Need (the Transformer paper) – arXiv 1706.03762