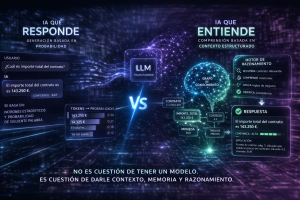

In Artificial Intelligence, most of the attention goes to models: their size, their parameter counts, and the computational power they require. In enterprise environments, however, the real limit is often memory, meaning how the system stores, retrieves, and manages information.

The first barrier appears in short-term memory, known as the context window. The context window sets the maximum amount of text, measured in tokens, that a model can process in a single interaction. When a solution needs to analyze extensive documents, contracts, histories, or multiple related files, that space fills up quickly. Once it is full, older or excess content has to be truncated or dropped, so accuracy decreases, relevant context is lost, and responses become inconsistent.
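To make the limit concrete, here is a minimal sketch of fitting a conversation into a fixed token budget. It assumes that one whitespace-separated word counts as one token, which is only a rough approximation of real tokenizers, and the names `count_tokens` and `fit_to_budget` are hypothetical helpers written for this illustration.

```python
def count_tokens(text: str) -> int:
    # Assumption: one whitespace-separated word is roughly one token.
    # Real tokenizers split text differently, so treat this as an estimate.
    return len(text.split())


def fit_to_budget(chunks: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent chunks that fit in max_tokens, in original order."""
    kept: list[str] = []
    used = 0
    # Walk from newest to oldest and stop at the first chunk that does not fit.
    for chunk in reversed(chunks):
        cost = count_tokens(chunk)
        if used + cost > max_tokens:
            break
        kept.append(chunk)
        used += cost
    kept.reverse()
    return kept


history = [
    "Contract clause one covers payment terms",
    "Clause two covers termination",
    "What is the notice period",
]
window = fit_to_budget(history, max_tokens=10)
print(window)
```

With a budget of 10 estimated tokens, the oldest entry no longer fits and is silently dropped, which is exactly how relevant context gets lost when the window fills up.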

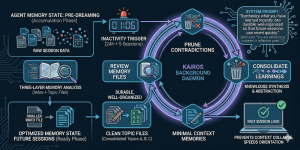

To overcome this limitation, modern architectures incorporate persistent memory. This capability retains information between sessions and gives the system operational continuity. With it, a digital worker can remember previous interactions, relate past queries, and offer contextualized responses. Without it, each conversation starts from scratch and the user has to supply the same context again.
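As an illustration of the idea, the sketch below stores conversation turns in a SQLite file so that a second session can read what the first one wrote. The `SessionMemory` class, its table layout, and the `user_id` key are assumptions made for this example, not a description of any particular product.

```python
import os
import sqlite3
import tempfile


class SessionMemory:
    """Hypothetical persistent store for conversation turns, backed by SQLite."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "user_id TEXT NOT NULL, role TEXT NOT NULL, content TEXT NOT NULL)"
        )
        self.conn.commit()

    def remember(self, user_id: str, role: str, content: str) -> None:
        self.conn.execute(
            "INSERT INTO messages (user_id, role, content) VALUES (?, ?, ?)",
            (user_id, role, content),
        )
        self.conn.commit()

    def recall(self, user_id: str, limit: int = 10) -> list[tuple[str, str]]:
        # Fetch the newest rows first, then restore chronological order.
        rows = self.conn.execute(
            "SELECT role, content FROM messages "
            "WHERE user_id = ? ORDER BY id DESC LIMIT ?",
            (user_id, limit),
        ).fetchall()
        return list(reversed(rows))

    def close(self) -> None:
        self.conn.close()


db_path = os.path.join(tempfile.mkdtemp(), "memory.db")

# First session: the user states a fact, then the session ends.
first = SessionMemory(db_path)
first.remember("u1", "user", "My contract number is 42")
first.close()

# Second session: a new connection still sees the earlier turn.
second = SessionMemory(db_path)
recalled = second.recall("u1")
second.close()
print(recalled)
```

The recalled turns can then be placed back into the context window at the start of the new session, which is what gives the conversation its continuity.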

It is also essential to distinguish between structured and unstructured memory. Structured memory is stored in organized databases and allows precise and fast queries. Unstructured memory includes PDF documents, emails, chats, and scanned files, which represent much of an organization’s real knowledge. Making effective use of this type of information depends on advanced technologies such as semantic embeddings, vector databases, and contextual retrieval systems.
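The retrieval step over unstructured text can be sketched in a few lines. This is a toy version under stated assumptions: `embed` uses plain word counts as a stand-in for a real semantic embedding model, and `retrieve` does a brute-force scan where a production system would query a vector database. Both function names are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: lowercase word counts.
    # A real embedding captures meaning, not just shared words.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    # Brute-force ranking; a vector database replaces this scan with an index.
    query_vec = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, embed(doc)),
        reverse=True,
    )
    return ranked[:top_k]


documents = [
    "invoice payment is due within thirty days",
    "the employee handbook covers vacation policy",
    "server maintenance is scheduled for sunday",
]
best = retrieve("when is the invoice payment due", documents)
print(best)
```

Only the top-ranked passages are passed to the model, so the system can draw on a large document collection without filling the context window.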

Many AI solutions perform well in demo phases but fail as the volume of information grows. The main reason is the absence of a scalable memory strategy. Without one, response times rise, data is duplicated, searches return irrelevant results, and traceability is lost.
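Two of these symptoms, duplication and lost traceability, can be addressed at ingestion time. The following is a minimal sketch, assuming that duplicates are texts that match after lowercasing and whitespace normalization; the `MemoryIndex` class and the source labels are hypothetical.

```python
import hashlib


class MemoryIndex:
    """Hypothetical ingestion index that deduplicates text and tracks sources."""

    def __init__(self) -> None:
        self.records: dict[str, dict] = {}

    def add(self, text: str, source: str) -> bool:
        """Store text once; return True if it was new, False if a duplicate."""
        # Assumption: case and spacing differences do not change the content.
        normalized = " ".join(text.lower().split())
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key in self.records:
            # Duplicate content: keep one copy, but record where else it came from.
            if source not in self.records[key]["sources"]:
                self.records[key]["sources"].append(source)
            return False
        self.records[key] = {"text": text, "sources": [source]}
        return True


index = MemoryIndex()
added_first = index.add("Payment due in 30 days", "contract.pdf")
added_second = index.add("payment  due in 30 DAYS", "email-123")
stored = list(index.records.values())
print(added_first, added_second, stored[0]["sources"])
```

Each stored passage keeps the list of documents it came from, so an answer built on it can still be traced back to its origin.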

In practice, the competitive advantage does not belong to those with the largest model, but to those who design a more efficient memory architecture. A powerful model without effective memory produces isolated responses. A model connected to a well-designed memory generates continuity, accuracy, and real operational value.
